
AI is becoming part of many children’s digital experiences, from learning apps and chat features to recommendation tools, creative tools, and possibly a growing number of toys.

A few years ago, “AI-powered” was often advertised as a high-tech benefit. Now, as more people ask questions about privacy, accuracy, safety, and screen habits, AI may not always be promoted so clearly.

That does not mean AI is bad. It means parents need a little AI literacy so they can spot where it might be used and make better choices for their children.

Challenge

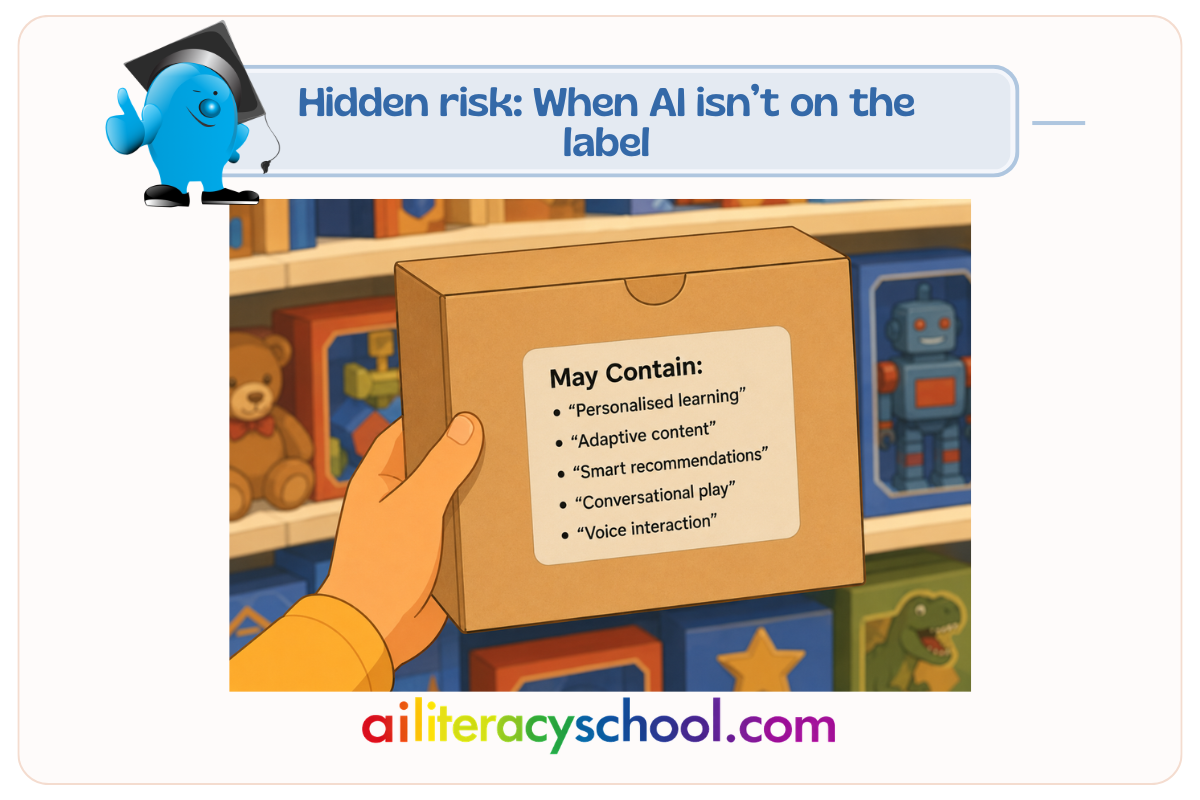

Parents often look for obvious words like “AI,” “artificial intelligence,” or “chatbot.” But AI can sit behind other phrases that sound more ordinary.

An app or toy might talk about being “personalised,” “adaptive,” “smart,” “responsive,” or “interactive.” These features can be helpful, especially when they support practice, creativity, or accessibility. But they may also mean the product is collecting information, making decisions, generating content, or responding to your child in ways that are not always easy to see.

The challenge is not to avoid every tool that uses AI. The challenge is to understand what the tool is doing, what information it uses, and whether your child is using it in a safe, age-appropriate way.

How this might show up in real life

An educational app might say it “adapts to your child’s level.” This could mean it changes questions based on answers, recommends new lessons, or uses data about your child’s progress.

A toy might be described as “smart,” “conversational,” or “able to respond.” This could mean it uses voice recognition, online processing, generated replies, or stored interaction data.

A creativity app might offer “instant story ideas,” “automatic feedback,” or “personalised suggestions.” This could involve AI generating text, images, hints, or corrections.

Try saying

“Let’s look at what this app does before we use it. Does it just give activities, or does it respond to you?”

“AI can be useful, but we still need to check what it says and decide what information we share.”

“If something feels like it is chatting with you, giving advice, or asking personal questions, come and show me.”

Talk About It

- What does this app or toy seem to know about you?

- Does it give the same thing to everyone, or does it change based on what you do?

- Is it helping you practise, or is it doing the thinking for you?

- Does it ever ask for your name, voice, photo, location, school, or feelings?

- Would you feel comfortable showing me what it said or asked?

- How could we check whether its answer is right?

Tip for Parents

Look beyond the word “AI.” Check for clues such as:

- “Personalised learning”

- “Adaptive content”

- “Smart recommendations”

- “Conversational play”

- “Voice interaction”

- “Instant feedback”

- “Generated stories, images, answers, or hints”

- “Learns with your child”

- “Powered by data”

- “Interactive companion”

These phrases do not automatically mean a product is unsafe. They are simply signals to pause and ask a few useful questions.

- What data does it collect?

- Does it need an account?

- Can it be used offline?

- Are conversations stored?

- Can parents control settings?

- Is there a clear privacy policy?

- Can your child easily understand when they are interacting with a machine?

Why This Matters

AI can support learning when it is used well. It can help children practise, explore ideas, get feedback, and access content in different ways.

But children are still learning how to judge information, protect privacy, understand persuasion, and separate real people from responsive technology. A child may not know whether a “smart” toy is storing their voice, whether a chatbot is making things up, or whether a personalised app is shaping what they see next.

That is why the goal is not fear. The goal is awareness.

AI-literate parents are better able to choose tools, set boundaries, ask good questions, and help children use technology with confidence. When parents stay involved, AI can become something children learn to question, not something they simply trust.

Quick Tip

Before your child uses a new app or connected toy, ask: “Does this just respond, or does it learn, generate, recommend, or remember?”

For more details on AI companions, including how ChatGPT can act as one, see AI Companions and Kids: A Parent’s Guide.

To understand how AI can affect real-life relationships, see AI Risk for Kids: When AI Starts to Crowd Out Real Friends.
